You should also avoid using keywords like row as variable names. Note that the language name is an identifier, so do not put single quotes around it.

So if I execute the UPDATE query after the loop has finished, will that commit the changes to the table?

Not by itself. The call to the function runs in the context of the calling transaction, so you need to commit after running SELECT LoopThroughTable() if you have disabled auto commit in your SQL client.

PostgreSQL also provides a special type called REFCURSOR for declaring a cursor variable.
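As a rough sketch of the points above (an unquoted language name, a cursor variable, and an explicit commit after the call), something like the following should work. The table and column names follow the text; the column types, the sample rows and the cursor name t_curs are assumptions made for illustration.

```sql
-- Scratch table for illustration; the column types are assumed.
CREATE TEMP TABLE the_table (
  pk_column integer PRIMARY KEY,
  resid     numeric
);
INSERT INTO the_table (pk_column) VALUES (1), (2), (3);

CREATE OR REPLACE FUNCTION LoopThroughTable()
  RETURNS void
AS $$
DECLARE
  -- Bound cursor variable (its type is refcursor). FOR UPDATE locks the
  -- rows so that WHERE CURRENT OF can target the row just fetched.
  t_curs CURSOR FOR SELECT * FROM the_table FOR UPDATE;
BEGIN
  -- t_row is declared implicitly by the FOR loop; "row" is avoided as a name.
  FOR t_row IN t_curs LOOP
    UPDATE the_table SET resid = 1.0 WHERE CURRENT OF t_curs;
  END LOOP;
END;
$$ LANGUAGE plpgsql;  -- an identifier, not 'plpgsql'

-- The function runs inside the caller's transaction, so the caller commits.
BEGIN;
SELECT LoopThroughTable();
COMMIT;
```

With auto commit enabled, the explicit BEGIN and COMMIT are not needed; the SELECT alone is its own transaction.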

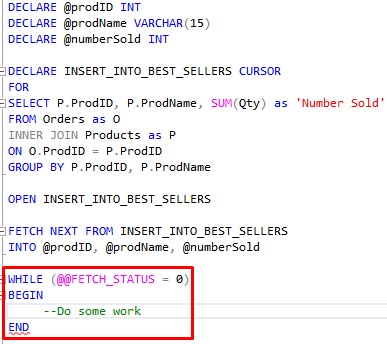

Doing updates row-by-row in a loop is almost always a bad idea: it will be extremely slow and won't scale. You should really find a way to avoid that.

All your function is doing is changing the value of the column in memory; you are just modifying the contents of a variable. If you want to update the data, you need an UPDATE statement inside the loop:

    CREATE OR REPLACE FUNCTION LoopThroughTable()
    ...
    FOR t_row in SELECT * FROM the_table LOOP
    ...
    where pk_column = t_row.pk_column --<<< !!! important !!!

Note that you have to add a where condition on the primary key to the update statement, otherwise you would update all rows for each iteration of the loop.

A slightly more efficient solution is to use a cursor, and then do the update using where current of.

Cursor behaviour in SQL Server and PostgreSQL is very different. A SQL cursor in PostgreSQL is a read-only pointer to the result set of a fully executed SELECT statement. Cursors are typically used within applications that ... But I would try to avoid all of these constructions; most of the time you don't need them to get your stuff done. You could use a recursive CTE or generate_series(), but I don't think that would be faster than a loop/cursor.

PostgreSQL FOR Loops VS MS-SQL Cursors (r/PostgreSQL, by -neilius-): I avoid cursors in MS-SQL because of performance and concurrency issues. Set-based UPDATE commands (UPDATE Table SET ...) are often 50 to 100 times faster than loops with cursors.

The second function, which loops over mytable, looks like this:

    create or replace function speed_cal_cutoff()
    ...
    select "id", loggerid, datecon, timecon, gcs_geom, after_bat_off, first_point from mytable
    ...
    when t_row.after_bat_off = 1 or t_row.first_point = 1

How can I optimize the code for better performance?

You are also retrieving the values incorrectly from the cursor. So even if the update in the loop found a row, it would update it to NULL.

Replacing the loop with separate set-based statements gives:

    set time_interval = (concat(datecon|| ' ' ||timecon)::timestamp) - prev_datetime
    from (select "id", ST_Distance(gcs_geom::geography, lag(gcs_geom::geography) over (partition by loggerid order by loggerid, datecon, timecon asc)) as gcs_distance,
          lag(loggerid) over (partition by loggerid order by datecon, timecon) as prev_loggerid,
          lag(concat(datecon|| ' ' ||timecon)::timestamp) over (partition by loggerid order by loggerid, datecon, timecon) as prev_datetime
          ... interval_seconds, calculated_speed from mytable
    ...
    and mytable.loggerid = subquery.prev_loggerid
    ...
    set interval_seconds = (extract(EPOCH from time_interval))
    ...
    set calculated_speed = gcs_distance/interval_seconds
    ...
    delete from mytable where calculated_speed > 41.6667

Combining all UPDATEs into one and then running the DELETE on a table with 10k records reduces the execution time by 2 minutes:

    set time_interval = (datecon + timecon) - subquery.prev_datetime,
        distance_interval = subquery.distance_interval,
        time_interval_seconds = (extract(EPOCH from (datecon + timecon) - subquery.prev_datetime)),
        calculated_speed = subquery.distance_interval/(extract(EPOCH from (datecon + timecon) - subquery.prev_datetime))
    ...
        lag(datecon + timecon) over (partition by loggerid order by loggerid, datecon, timecon) as prev_datetime,
        ST_Distance(gcs_geom::geography, lag(gcs_geom::geography) over
          (partition by loggerid order by loggerid, datecon, timecon)) as distance_interval

I will turn the columns into arrays and feed them into the function and try using query parallelism, too.
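For reference, the combined statement might be assembled roughly as follows. This is only a sketch: it assumes the PostGIS extension is installed (ST_Distance and the geography type come from it), and the column types, the sample rows, the join on "id", the named window w and the NULLIF guard against a zero time difference are all assumptions added for illustration rather than parts of the original query. The after_bat_off / first_point handling from the loop is not covered.

```sql
-- Assumption: PostGIS is available on this server.
CREATE EXTENSION IF NOT EXISTS postgis;

-- Scratch table; column names follow the text, types are assumed.
CREATE TEMP TABLE mytable (
  "id"                  integer PRIMARY KEY,
  loggerid              integer,
  datecon               date,
  timecon               time,
  gcs_geom              geometry(Point, 4326),
  time_interval         interval,
  distance_interval     double precision,
  time_interval_seconds double precision,
  calculated_speed      double precision
);

-- Made-up sample points for a single logger, 10 seconds apart.
INSERT INTO mytable ("id", loggerid, datecon, timecon, gcs_geom) VALUES
  (1, 1, DATE '2020-01-01', TIME '10:00:00', ST_SetSRID(ST_MakePoint(0, 0), 4326)),
  (2, 1, DATE '2020-01-01', TIME '10:00:10', ST_SetSRID(ST_MakePoint(0.001, 0), 4326)),
  (3, 1, DATE '2020-01-01', TIME '10:00:20', ST_SetSRID(ST_MakePoint(1, 0), 4326));

-- One set-based UPDATE: the subquery computes the previous row's values
-- per logger with lag(), and the outer statement joins back on "id".
UPDATE mytable
SET time_interval         = (datecon + timecon) - subquery.prev_datetime,
    distance_interval     = subquery.distance_interval,
    time_interval_seconds = extract(EPOCH FROM (datecon + timecon) - subquery.prev_datetime),
    calculated_speed      = subquery.distance_interval
                            / NULLIF(extract(EPOCH FROM (datecon + timecon) - subquery.prev_datetime), 0)
FROM (
  SELECT "id",
         lag(datecon + timecon) OVER w AS prev_datetime,
         ST_Distance(gcs_geom::geography, lag(gcs_geom::geography) OVER w) AS distance_interval
  FROM mytable
  WINDOW w AS (PARTITION BY loggerid ORDER BY datecon, timecon)
) AS subquery
WHERE mytable."id" = subquery."id";

-- Rows whose speed is NULL (first point of each logger) are kept.
DELETE FROM mytable WHERE calculated_speed > 41.6667;
```

The first row of each logger has no previous row, so lag() returns NULL and all four computed columns stay NULL for it.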

I am creating a for-loop function to update multiple columns (time, distance and speed) calculated from the values of the current row and the previous row, and to delete any row whose updated speed exceeds the cutoff value. The sample table has around 500k records, but the function takes hours to execute and still does not finish. Indexes, work_mem, fillfactor and VACUUM FULL do not make a significant difference.

I then average the values of these points (this average is known as a residual), and add this value to the table in the resid column. As a proof of concept, I am trying to simply iterate over the table and set the value of the resid column to 1.0 in every row.
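For the resid proof of concept, a minimal sketch of both approaches is shown here, to be run in a fresh scratch session. The table name the_table and the key column pk_column are placeholders, and the column types and sample rows are assumed. The single UPDATE is the set-based form; the loop is included only for comparison.

```sql
-- Scratch table for illustration; names and types are placeholders.
CREATE TEMP TABLE the_table (
  pk_column integer PRIMARY KEY,
  resid     numeric
);
INSERT INTO the_table (pk_column) VALUES (1), (2), (3);

-- Set-based: one statement updates every row.
UPDATE the_table SET resid = 1.0;

-- Row-by-row equivalent, shown only for comparison. Assigning to a field of
-- t_row would change nothing in the table; the UPDATE does the actual work,
-- and the WHERE on the primary key limits it to the current row.
DO $$
DECLARE
  t_row record;
BEGIN
  FOR t_row IN SELECT * FROM the_table LOOP
    UPDATE the_table
    SET resid = 1.0
    WHERE pk_column = t_row.pk_column;
  END LOOP;
END;
$$;
```

Both leave the table in the same state, but the loop issues one UPDATE per row, which is where the time goes on large tables.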
